Oracle Database has been the backbone of enterprise data management for decades. Its PL/SQL language, a procedural extension of SQL, powers millions of stored procedures, packages, triggers, and functions across every industry. These PL/SQL codebases represent enormous investments in business logic, data validation rules, and ETL processes. But Oracle's licensing model, one of the most expensive in the database industry, is driving organizations to re-evaluate their database platform strategy. As enterprises pursue cloud modernization, Google BigQuery presents a fundamentally different architecture that eliminates the cost and complexity of Oracle while providing superior analytical performance at scale.

Migrating from Oracle PL/SQL to BigQuery is not a simple lift-and-shift. Oracle and BigQuery have different execution models, different SQL dialects, and different approaches to procedural logic. Oracle excels at row-level procedural processing with cursor loops, exception handlers, and imperative control flow. BigQuery excels at set-based analytical processing with columnar storage, distributed execution, and declarative SQL. A successful migration requires understanding both platforms deeply and applying systematic translation patterns that preserve business logic while leveraging BigQuery's strengths. This guide provides the comprehensive technical reference for that translation.

Why Oracle Teams Are Moving to BigQuery

The Licensing Pressure

Oracle's licensing model is notoriously complex and expensive. Enterprise Edition licenses can cost $47,500 per processor, with additional charges for options like Partitioning ($11,500 per processor), Advanced Compression ($11,500 per processor), and Real Application Clusters ($23,000 per processor). For a typical enterprise running Oracle across multiple environments, the annual licensing and support costs can reach millions of dollars. Oracle's audit practices add another dimension of risk, as organizations frequently discover they are out of compliance with licensing terms during Oracle's rigorous audit process.

BigQuery eliminates this entire licensing paradigm. There are no per-processor fees, no option charges, and no compliance audits. BigQuery charges for storage (approximately $0.02 per GB per month for active storage) and for compute (on-demand pricing of $6.25 per TB processed, or flat-rate slots starting at $2,000 per month for 100 slots). For most analytical workloads, BigQuery's total cost of ownership is 40-70% lower than Oracle, even before accounting for the infrastructure, DBA staffing, and operational costs that Oracle requires.

Cloud Modernization and Serverless Architecture

Oracle Database requires significant infrastructure management. Whether running on-premises or on Oracle Cloud Infrastructure, organizations must provision compute instances, manage storage volumes, configure Real Application Clusters for high availability, tune memory parameters, apply security patches, and maintain backup and recovery systems. Each Oracle instance requires dedicated DBA attention for performance tuning, space management, and version upgrades.

BigQuery operates on a completely different model. There is no infrastructure to manage, no instances to provision, and no database administration overhead. BigQuery automatically handles storage allocation, query optimization, data distribution, high availability, security patching, and version upgrades. This serverless model frees database teams to focus on data modeling, business logic, and analytics rather than infrastructure operations.

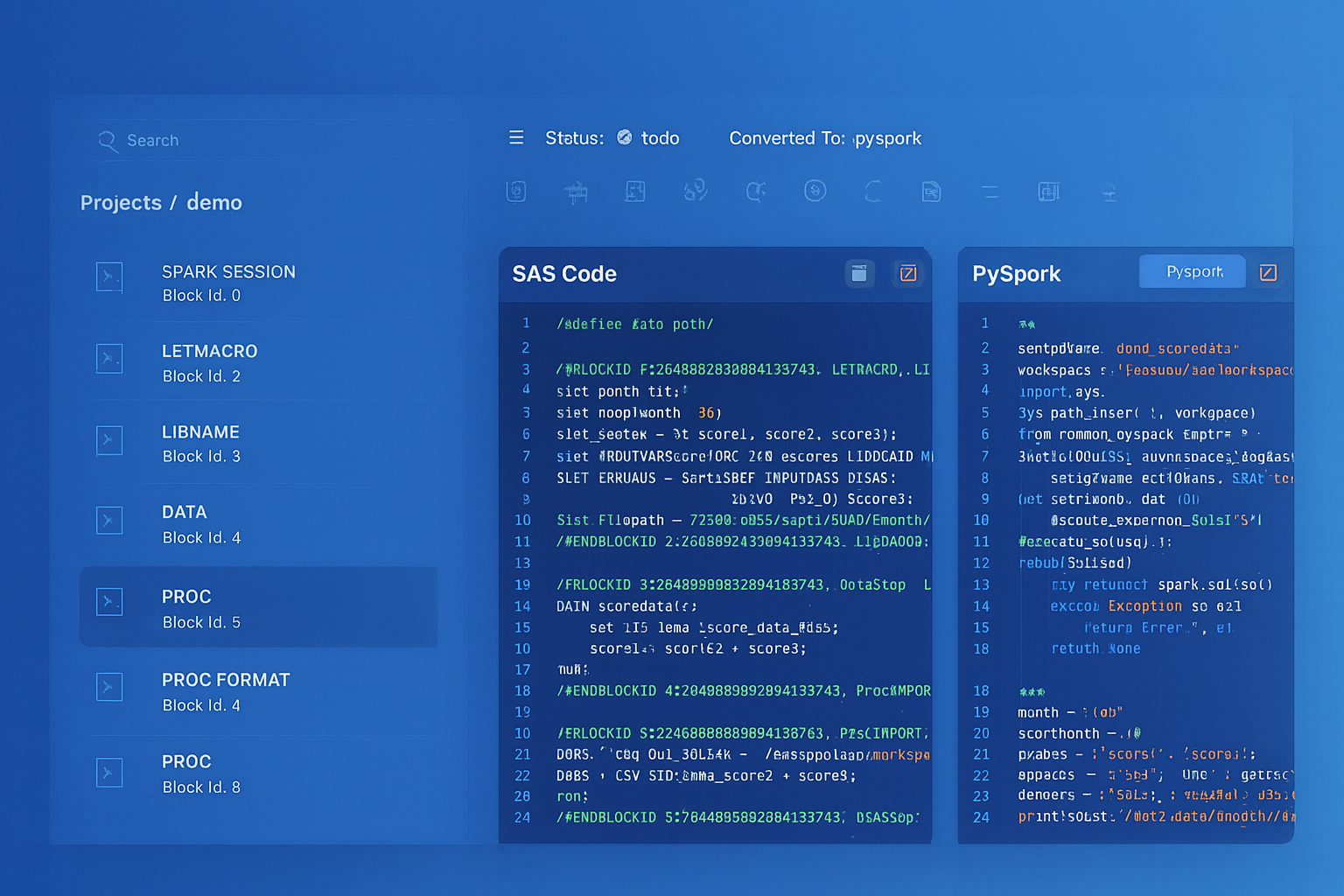

Oracle PL/SQL to BigQuery migration — automated end-to-end by MigryX

Architecture Comparison: Oracle vs. BigQuery

Oracle Database is a general-purpose relational database management system built around a shared-everything architecture. A single Oracle instance manages both storage and compute, with the SGA (System Global Area) providing shared memory for caching, sorting, and session management. PL/SQL procedures execute within the database server process, operating on data through cursors, bulk operations, and row-level processing.

BigQuery separates storage and compute entirely. Data is stored in Google's Capacitor columnar format across a distributed file system (Colossus). Query execution happens in a separate compute layer (Dremel) that provisions resources dynamically for each query. This separation means that storage costs remain constant regardless of compute usage, and compute resources scale elastically based on query complexity. There is no equivalent of Oracle's SGA because there is no persistent server process to manage.

This architectural difference has profound implications for PL/SQL migration. Oracle procedures that rely on session-level state (package variables, global temporary tables, cursor caching) need to be redesigned for BigQuery's stateless execution model. Oracle's row-level cursor processing needs to be rewritten as set-based SQL operations. And Oracle's trigger-based event handling needs to be replaced with BigQuery's event-driven architecture using Pub/Sub and Cloud Functions.

MigryX: Purpose-Built Parsers for Every Legacy Technology

MigryX does not rely on generic text matching or regex-based parsing. For every supported legacy technology, MigryX has built a dedicated Abstract Syntax Tree (AST) parser that understands the full grammar and semantics of that platform. This means MigryX captures not just what the code does, but why — understanding implicit behaviors, default settings, and platform-specific quirks that generic tools miss entirely.

Comprehensive Construct Mapping Table

The following table provides the complete mapping between Oracle PL/SQL constructs and their BigQuery equivalents. This serves as the primary reference during migration planning and execution.

| Oracle PL/SQL Construct | BigQuery Equivalent | Migration Notes |

|---|---|---|

| Stored Procedures | BigQuery Stored Procedures | BigQuery supports CREATE PROCEDURE with scripting syntax |

| Packages | Datasets with grouped procedures | Package procedures become individual procedures in a shared dataset |

| Package Variables | DECLARE in scripting / temp tables | Session state replaced by script-scoped variables or temp tables |

| Triggers | Pub/Sub + Cloud Functions | No native triggers; event-driven patterns via GCP services |

| Sequences | BigQuery sequences / GENERATE_UUID() | Use CREATE SEQUENCE or GENERATE_UUID() for surrogate keys |

| Materialized Views | BigQuery materialized views | BigQuery auto-refreshes; syntax differences in creation |

| DBMS_SCHEDULER | Cloud Scheduler + Cloud Composer | Scheduled jobs become Cloud Scheduler triggers or Composer DAGs |

| UTL_FILE | GCS via Cloud Functions | File I/O replaced by GCS object operations |

| BULK COLLECT | ARRAY_AGG / set-based queries | Bulk operations unnecessary in BigQuery's set-based model |

| CONNECT BY | Recursive CTE (WITH RECURSIVE) | Hierarchical queries use standard recursive common table expressions |

| DECODE() | CASE expression | Direct syntactic translation |

| NVL() | COALESCE() or IFNULL() | COALESCE supports multiple arguments; IFNULL is two-argument |

| NVL2() | IF(expr IS NOT NULL, val1, val2) | Use IF expression or CASE |

| ROWNUM | ROW_NUMBER() OVER() | Must use window function; no implicit row numbering |

| SYSDATE | CURRENT_TIMESTAMP() | Returns TIMESTAMP; use CURRENT_DATE() for date-only |

| TO_DATE() | PARSE_DATE() / PARSE_TIMESTAMP() | Format strings differ (Oracle 'YYYY-MM-DD' vs BigQuery '%Y-%m-%d') |

| TO_CHAR() | FORMAT_DATE() / FORMAT_TIMESTAMP() | Format strings require translation |

| LISTAGG() | STRING_AGG() | Syntax: STRING_AGG(col, delimiter ORDER BY col) |

| DUAL | (no table needed) | BigQuery allows SELECT without FROM |

| MINUS | EXCEPT DISTINCT | Direct keyword replacement |

| ROWID | No equivalent (use primary key) | BigQuery has no physical row identifier |

| DBMS_OUTPUT.PUT_LINE | SELECT for debugging / Cloud Logging | Script debugging uses SELECT statements or logging |

| EXCEPTION WHEN | BEGIN...EXCEPTION in scripting | BigQuery scripting supports exception handling blocks |

| CURSOR FOR LOOP | FOR...IN (scripting) or set-based SQL | Prefer set-based rewrites; scripting FOR loops available if needed |

| %ROWTYPE / %TYPE | No equivalent | Use explicit column types in variable declarations |

| MERGE (UPSERT) | MERGE | BigQuery supports MERGE with minor syntax differences |

| Global Temporary Tables | Temp tables (CREATE TEMP TABLE) | Session-scoped; automatically dropped after script/session ends |

| PL/SQL Records | STRUCT type | Composite types become STRUCT fields |

| PL/SQL Tables (associative arrays) | ARRAY type | Index-by tables become BigQuery ARRAYs |

| REF CURSOR | Not supported | Replace with temp tables or result sets |

| AUTONOMOUS_TRANSACTION | Separate script execution | No autonomous transactions; use separate procedure calls |

Function-by-Function Translation Reference

Oracle's built-in function library is extensive, with over 300 documented functions across categories. The following sections provide the detailed mapping for the most commonly used functions organized by category.

String Functions

| Oracle Function | BigQuery Function | Example |

|---|---|---|

| SUBSTR(str, pos, len) | SUBSTR(str, pos, len) | Same syntax, 1-based indexing |

| INSTR(str, substr) | STRPOS(str, substr) | Returns 0 if not found (Oracle returns 0 too) |

| LENGTH(str) | LENGTH(str) | Identical behavior |

| UPPER(str) / LOWER(str) | UPPER(str) / LOWER(str) | Identical behavior |

| TRIM(str) | TRIM(str) | Identical behavior |

| LTRIM(str) / RTRIM(str) | LTRIM(str) / RTRIM(str) | Identical behavior |

| LPAD(str, len, pad) | LPAD(str, len, pad) | Identical behavior |

| RPAD(str, len, pad) | RPAD(str, len, pad) | Identical behavior |

| REPLACE(str, old, new) | REPLACE(str, old, new) | Identical behavior |

| TRANSLATE(str, from, to) | TRANSLATE(str, from, to) | Identical behavior |

| REGEXP_REPLACE(str, pat, rep) | REGEXP_REPLACE(str, pat, rep) | Regex syntax may differ slightly |

| REGEXP_SUBSTR(str, pat) | REGEXP_EXTRACT(str, pat) | Function name change |

| INITCAP(str) | INITCAP(str) | Identical behavior |

| CONCAT(a, b) | CONCAT(a, b) | BigQuery CONCAT supports multiple args |

| || (concatenation) | CONCAT() or || | BigQuery supports || operator |

| ASCII(char) | TO_CODE_POINTS(char)[OFFSET(0)] | Different approach in BigQuery |

| CHR(n) | CODE_POINTS_TO_STRING([n]) | Different approach in BigQuery |

Date and Timestamp Functions

| Oracle Function | BigQuery Function | Notes |

|---|---|---|

| SYSDATE | CURRENT_DATE() or CURRENT_TIMESTAMP() | Choose based on needed precision |

| SYSTIMESTAMP | CURRENT_TIMESTAMP() | Direct replacement |

| ADD_MONTHS(date, n) | DATE_ADD(date, INTERVAL n MONTH) | Syntax change |

| MONTHS_BETWEEN(d1, d2) | DATE_DIFF(d1, d2, MONTH) | Returns integer in BigQuery |

| LAST_DAY(date) | LAST_DAY(date) | Identical behavior |

| NEXT_DAY(date, day) | DATE_ADD(date, INTERVAL ... DAY) with logic | No direct equivalent; calculate manually |

| TRUNC(date) | DATE_TRUNC(date, DAY) | Different syntax; TRUNC to day |

| TRUNC(date, 'MM') | DATE_TRUNC(date, MONTH) | Granularity specified differently |

| EXTRACT(YEAR FROM date) | EXTRACT(YEAR FROM date) | Identical syntax |

| TO_DATE('str', 'fmt') | PARSE_DATE('fmt', 'str') | Argument order reversed; format strings differ |

| TO_CHAR(date, 'fmt') | FORMAT_DATE('fmt', date) | Argument order reversed; format strings differ |

| TO_TIMESTAMP('str', 'fmt') | PARSE_TIMESTAMP('fmt', 'str') | Argument order reversed |

| date1 - date2 (days) | DATE_DIFF(date1, date2, DAY) | Oracle returns number; BigQuery uses function |

| date + n (add days) | DATE_ADD(date, INTERVAL n DAY) | Oracle uses arithmetic; BigQuery uses function |

Numeric and Math Functions

| Oracle Function | BigQuery Function | Notes |

|---|---|---|

| ROUND(n, d) | ROUND(n, d) | Identical behavior |

| TRUNC(n, d) | TRUNC(n, d) | Identical behavior |

| CEIL(n) / FLOOR(n) | CEIL(n) / FLOOR(n) | Identical behavior |

| MOD(n, m) | MOD(n, m) | Identical behavior |

| ABS(n) | ABS(n) | Identical behavior |

| POWER(n, e) | POWER(n, e) or POW(n, e) | BigQuery supports both names |

| SQRT(n) | SQRT(n) | Identical behavior |

| SIGN(n) | SIGN(n) | Identical behavior |

| LOG(base, n) | LOG(n, base) | Argument order reversed |

| LN(n) | LN(n) | Identical behavior |

| EXP(n) | EXP(n) | Identical behavior |

Conversion and Conditional Functions

| Oracle Function | BigQuery Function | Notes |

|---|---|---|

| TO_NUMBER(str) | CAST(str AS NUMERIC) or SAFE_CAST | SAFE_CAST returns NULL on failure instead of error |

| TO_CHAR(num) | CAST(num AS STRING) | For simple conversion; FORMAT for formatting |

| CAST(expr AS type) | CAST(expr AS type) | Type names may differ (NUMBER vs NUMERIC) |

| DECODE(col, v1, r1, ...) | CASE col WHEN v1 THEN r1 ... END | DECODE becomes searched or simple CASE |

| NVL(a, b) | IFNULL(a, b) or COALESCE(a, b) | COALESCE for multi-argument null handling |

| NVL2(a, b, c) | IF(a IS NOT NULL, b, c) | No direct equivalent; use IF expression |

| NULLIF(a, b) | NULLIF(a, b) | Identical behavior |

| GREATEST(a, b, ...) | GREATEST(a, b, ...) | Identical behavior |

| LEAST(a, b, ...) | LEAST(a, b, ...) | Identical behavior |

| CASE WHEN ... THEN ... END | CASE WHEN ... THEN ... END | Identical syntax |

From parsed legacy code to production-ready modern equivalents — MigryX automates the entire conversion pipeline

From Legacy Complexity to Modern Clarity with MigryX

Legacy ETL platforms encode business logic in visual workflows, proprietary XML formats, and platform-specific constructs that are opaque to standard analysis tools. MigryX’s deep parsers crack open these proprietary formats and extract the underlying data transformations, business rules, and data flows. The result is complete transparency into what your legacy code actually does — often revealing undocumented logic that even the original developers had forgotten.

Code Examples: PL/SQL Procedure to BigQuery Procedure

Example 1: Customer Aggregation Procedure

Consider an Oracle PL/SQL procedure that calculates monthly customer revenue summaries, using cursor loops, exception handling, and sequence-based ID generation:

-- Oracle PL/SQL

CREATE OR REPLACE PROCEDURE calc_monthly_revenue(

p_year IN NUMBER,

p_month IN NUMBER

) AS

v_summary_id NUMBER;

v_total_count NUMBER := 0;

CURSOR c_customers IS

SELECT customer_id,

customer_name,

NVL(region, 'Unknown') AS region

FROM customers

WHERE is_active = 1;

BEGIN

-- Clear existing data for the period

DELETE FROM monthly_revenue_summary

WHERE revenue_year = p_year AND revenue_month = p_month;

FOR rec IN c_customers LOOP

SELECT revenue_summary_seq.NEXTVAL INTO v_summary_id FROM DUAL;

INSERT INTO monthly_revenue_summary (

summary_id, customer_id, customer_name, region,

revenue_year, revenue_month, total_revenue,

order_count, avg_order_value, created_date

)

SELECT

v_summary_id,

rec.customer_id,

rec.customer_name,

rec.region,

p_year,

p_month,

NVL(SUM(o.amount), 0),

COUNT(o.order_id),

NVL(ROUND(AVG(o.amount), 2), 0),

SYSDATE

FROM orders o

WHERE o.customer_id = rec.customer_id

AND EXTRACT(YEAR FROM o.order_date) = p_year

AND EXTRACT(MONTH FROM o.order_date) = p_month;

v_total_count := v_total_count + 1;

END LOOP;

DBMS_OUTPUT.PUT_LINE('Processed ' || TO_CHAR(v_total_count) || ' customers');

COMMIT;

EXCEPTION

WHEN OTHERS THEN

ROLLBACK;

DBMS_OUTPUT.PUT_LINE('Error: ' || SQLERRM);

RAISE;

END calc_monthly_revenue;

This procedure translates to BigQuery as a set-based operation, eliminating the cursor loop entirely:

-- BigQuery Stored Procedure

CREATE OR REPLACE PROCEDURE `project.analytics.calc_monthly_revenue`(

p_year INT64,

p_month INT64

)

BEGIN

-- Clear existing data for the period

DELETE FROM `project.analytics.monthly_revenue_summary`

WHERE revenue_year = p_year AND revenue_month = p_month;

-- Set-based insert replaces cursor loop

INSERT INTO `project.analytics.monthly_revenue_summary` (

summary_id, customer_id, customer_name, region,

revenue_year, revenue_month, total_revenue,

order_count, avg_order_value, created_date

)

SELECT

GENERATE_UUID() AS summary_id,

c.customer_id,

c.customer_name,

IFNULL(c.region, 'Unknown') AS region,

p_year,

p_month,

IFNULL(SUM(o.amount), 0) AS total_revenue,

COUNT(o.order_id) AS order_count,

IFNULL(ROUND(AVG(o.amount), 2), 0) AS avg_order_value,

CURRENT_TIMESTAMP() AS created_date

FROM `project.raw.customers` c

LEFT JOIN `project.raw.orders` o

ON c.customer_id = o.customer_id

AND EXTRACT(YEAR FROM o.order_date) = p_year

AND EXTRACT(MONTH FROM o.order_date) = p_month

WHERE c.is_active = 1

GROUP BY c.customer_id, c.customer_name, c.region;

END;

Key transformation: The cursor loop iterating over individual customers and inserting one row at a time is replaced by a single set-based INSERT...SELECT with a LEFT JOIN. This leverages BigQuery's distributed execution engine for massively parallel processing instead of Oracle's serial cursor iteration.

Example 2: Hierarchical Query with CONNECT BY

Oracle's CONNECT BY syntax for hierarchical queries is one of the most common constructs requiring translation. Here is an Oracle query building an organizational hierarchy:

-- Oracle: Hierarchical query SELECT employee_id, employee_name, manager_id, LEVEL AS depth, SYS_CONNECT_BY_PATH(employee_name, ' > ') AS hierarchy_path, CONNECT_BY_ISLEAF AS is_leaf FROM employees START WITH manager_id IS NULL CONNECT BY PRIOR employee_id = manager_id ORDER SIBLINGS BY employee_name;

The BigQuery equivalent uses a recursive CTE:

-- BigQuery: Recursive CTE

WITH RECURSIVE org_hierarchy AS (

-- Anchor: top-level employees (no manager)

SELECT

employee_id,

employee_name,

manager_id,

1 AS depth,

CONCAT(' > ', employee_name) AS hierarchy_path

FROM `project.hr.employees`

WHERE manager_id IS NULL

UNION ALL

-- Recursive: join children to parents

SELECT

e.employee_id,

e.employee_name,

e.manager_id,

h.depth + 1 AS depth,

CONCAT(h.hierarchy_path, ' > ', e.employee_name) AS hierarchy_path

FROM `project.hr.employees` e

INNER JOIN org_hierarchy h

ON e.manager_id = h.employee_id

)

SELECT

o.employee_id,

o.employee_name,

o.manager_id,

o.depth,

o.hierarchy_path,

CASE

WHEN NOT EXISTS (

SELECT 1 FROM `project.hr.employees` e2

WHERE e2.manager_id = o.employee_id

) THEN 1 ELSE 0

END AS is_leaf

FROM org_hierarchy o

ORDER BY hierarchy_path;

Example 3: Package Translation

Oracle packages bundle related procedures, functions, and variables into a single namespace. BigQuery has no direct package construct, but the pattern translates to a dataset containing related procedures and functions. Consider an Oracle package for customer data management:

-- Oracle Package Specification

CREATE OR REPLACE PACKAGE pkg_customer_mgmt AS

g_default_status VARCHAR2(10) := 'ACTIVE';

FUNCTION get_customer_tier(p_revenue NUMBER) RETURN VARCHAR2;

PROCEDURE update_customer_status(p_customer_id NUMBER, p_status VARCHAR2);

PROCEDURE archive_inactive_customers(p_cutoff_date DATE);

END pkg_customer_mgmt;

-- Oracle Package Body

CREATE OR REPLACE PACKAGE BODY pkg_customer_mgmt AS

FUNCTION get_customer_tier(p_revenue NUMBER) RETURN VARCHAR2 IS

BEGIN

RETURN CASE

WHEN p_revenue >= 1000000 THEN 'PLATINUM'

WHEN p_revenue >= 500000 THEN 'GOLD'

WHEN p_revenue >= 100000 THEN 'SILVER'

ELSE 'BRONZE'

END;

END get_customer_tier;

PROCEDURE update_customer_status(p_customer_id NUMBER, p_status VARCHAR2) IS

BEGIN

UPDATE customers SET status = p_status, updated_date = SYSDATE

WHERE customer_id = p_customer_id;

COMMIT;

END update_customer_status;

PROCEDURE archive_inactive_customers(p_cutoff_date DATE) IS

BEGIN

INSERT INTO customers_archive

SELECT c.*, SYSDATE AS archived_date

FROM customers c

WHERE c.last_activity_date < p_cutoff_date

AND c.status = 'INACTIVE';

DELETE FROM customers

WHERE last_activity_date < p_cutoff_date

AND status = 'INACTIVE';

COMMIT;

END archive_inactive_customers;

END pkg_customer_mgmt;

In BigQuery, this package becomes a dataset (customer_mgmt) with individual procedures and functions:

-- BigQuery: Function (replaces package function)

CREATE OR REPLACE FUNCTION `project.customer_mgmt.get_customer_tier`(

p_revenue NUMERIC

) RETURNS STRING AS (

CASE

WHEN p_revenue >= 1000000 THEN 'PLATINUM'

WHEN p_revenue >= 500000 THEN 'GOLD'

WHEN p_revenue >= 100000 THEN 'SILVER'

ELSE 'BRONZE'

END

);

-- BigQuery: Procedure (replaces package procedure)

CREATE OR REPLACE PROCEDURE `project.customer_mgmt.update_customer_status`(

p_customer_id INT64,

p_status STRING

)

BEGIN

UPDATE `project.raw.customers`

SET status = p_status, updated_date = CURRENT_TIMESTAMP()

WHERE customer_id = p_customer_id;

END;

-- BigQuery: Procedure (replaces archive procedure)

CREATE OR REPLACE PROCEDURE `project.customer_mgmt.archive_inactive_customers`(

p_cutoff_date DATE

)

BEGIN

INSERT INTO `project.archive.customers_archive`

SELECT c.*, CURRENT_TIMESTAMP() AS archived_date

FROM `project.raw.customers` c

WHERE c.last_activity_date < p_cutoff_date

AND c.status = 'INACTIVE';

DELETE FROM `project.raw.customers`

WHERE last_activity_date < p_cutoff_date

AND status = 'INACTIVE';

END;

Handling Oracle-Specific Patterns

Replacing Triggers with Event-Driven Architecture

Oracle triggers execute automatically in response to DML events (INSERT, UPDATE, DELETE) on tables. BigQuery does not support triggers natively. The migration pattern replaces triggers with an event-driven architecture using Cloud Pub/Sub and Cloud Functions. When data changes need to trigger downstream actions, BigQuery's change data capture capabilities combined with Pub/Sub notifications provide an equivalent mechanism. For audit logging, which is one of the most common trigger use cases, BigQuery's built-in INFORMATION_SCHEMA views and Cloud Audit Logs provide comprehensive change tracking without custom triggers.

Replacing UTL_FILE with GCS Operations

Oracle's UTL_FILE package enables PL/SQL procedures to read and write files on the database server's file system. In BigQuery, file operations are handled through Google Cloud Storage. A Cloud Function or Cloud Run service replaces the UTL_FILE operations, reading from and writing to GCS buckets. For common patterns like generating CSV exports, BigQuery's EXPORT DATA statement writes query results directly to GCS without requiring any procedural code.

Date Format String Translation

Oracle and BigQuery use different date format string conventions. Oracle uses format elements like YYYY, MM, DD, HH24, MI, SS. BigQuery uses C-style format specifiers like %Y, %m, %d, %H, %M, %S. The following table maps the most common format elements:

| Oracle Format | BigQuery Format | Description |

|---|---|---|

| YYYY | %Y | 4-digit year |

| YY | %y | 2-digit year |

| MM | %m | Month (01-12) |

| MON | %b | Abbreviated month name |

| DD | %d | Day of month (01-31) |

| HH24 | %H | Hour (00-23) |

| HH or HH12 | %I | Hour (01-12) |

| MI | %M | Minute (00-59) |

| SS | %S | Second (00-59) |

| DAY | %A | Full weekday name |

| DY | %a | Abbreviated weekday name |

| AM / PM | %p | AM/PM indicator |

How MigryX Automates Oracle PL/SQL to BigQuery Migration

MigryX provides a comprehensive Oracle PL/SQL parser that processes DDL scripts, stored procedures, packages, functions, and triggers. The parser builds a complete abstract syntax tree of each PL/SQL unit, identifying every function call, variable reference, cursor definition, exception handler, and control flow structure. This AST powers both the automated translation engine and the data lineage tracking through MigryX Atlas.

MigryX Oracle Parser Capabilities

- Full PL/SQL parsing: Handles procedures, packages, functions, triggers, and anonymous blocks with complete syntax coverage

- Function mapping engine: Automatically translates 300+ Oracle built-in functions to BigQuery equivalents with correct argument ordering and type handling

- Date format translation: Converts Oracle format strings to BigQuery format specifiers automatically

- Cursor loop elimination: Identifies cursor-based row processing and rewrites as set-based SQL operations for optimal BigQuery performance

- CONNECT BY to recursive CTE: Converts hierarchical queries to standard recursive CTEs with proper anchor and recursive member construction

- Package decomposition: Splits Oracle packages into individual BigQuery procedures and functions organized by dataset

- DECODE/NVL translation: Automatically converts Oracle conditional functions to BigQuery CASE/IFNULL/COALESCE equivalents

- Lineage preservation: Tracks column-level data lineage from Oracle source to BigQuery target through MigryX Atlas

- Merlin AI for complex logic: Handles non-deterministic patterns like dynamic SQL, DBMS_SQL, and complex cursor variable usage with AI-assisted translation

MigryX's approach to Oracle migration is fundamentally different from simple find-and-replace transpilers. The parser understands PL/SQL semantics, not just syntax. When it encounters a cursor loop that processes rows individually, it does not simply translate the loop to BigQuery scripting syntax. Instead, it analyzes the loop body, identifies the transformation pattern, and generates an equivalent set-based SQL statement that leverages BigQuery's distributed execution engine. This semantic understanding is what enables MigryX to produce BigQuery code that is not just functionally correct but also performant, following BigQuery best practices for query optimization and resource efficiency.

For enterprise Oracle estates with thousands of PL/SQL objects, MigryX provides a complete migration workflow: automated parsing, translation, validation through parallel run comparison, and lineage documentation. The platform handles the volume that makes manual migration impractical while delivering the accuracy that makes automated migration trustworthy.

Why MigryX Is the Only Platform That Handles This Migration

The challenges described throughout this article are exactly what MigryX was built to solve. Here is how MigryX transforms this process:

- Deep AST parsing: MigryX’s custom-built parsers achieve 95% accuracy on every supported legacy technology — not through approximation, but through true semantic understanding.

- Merlin AI augmentation: Where deterministic parsing reaches its limit, Merlin AI resolves ambiguities and implicit behaviors, pushing accuracy to 99%.

- Complete coverage: MigryX supports 25+ source technologies including SAS, Informatica, DataStage, SSIS, Alteryx, Talend, ODI, Teradata, and Oracle PL/SQL.

- End-to-end automation: From parsing to conversion to validation — MigryX automates the entire pipeline, not just one step.

MigryX combines precision AST parsing with Merlin AI to deliver 99% accurate, production-ready migration — turning what used to be a multi-year manual effort into a streamlined, validated process. See it in action.

Ready to Migrate Your Oracle PL/SQL to BigQuery?

MigryX parses your Oracle procedures, packages, and functions, generating production-ready BigQuery SQL with complete lineage tracking. See it working on your own codebase.

Schedule a Demo